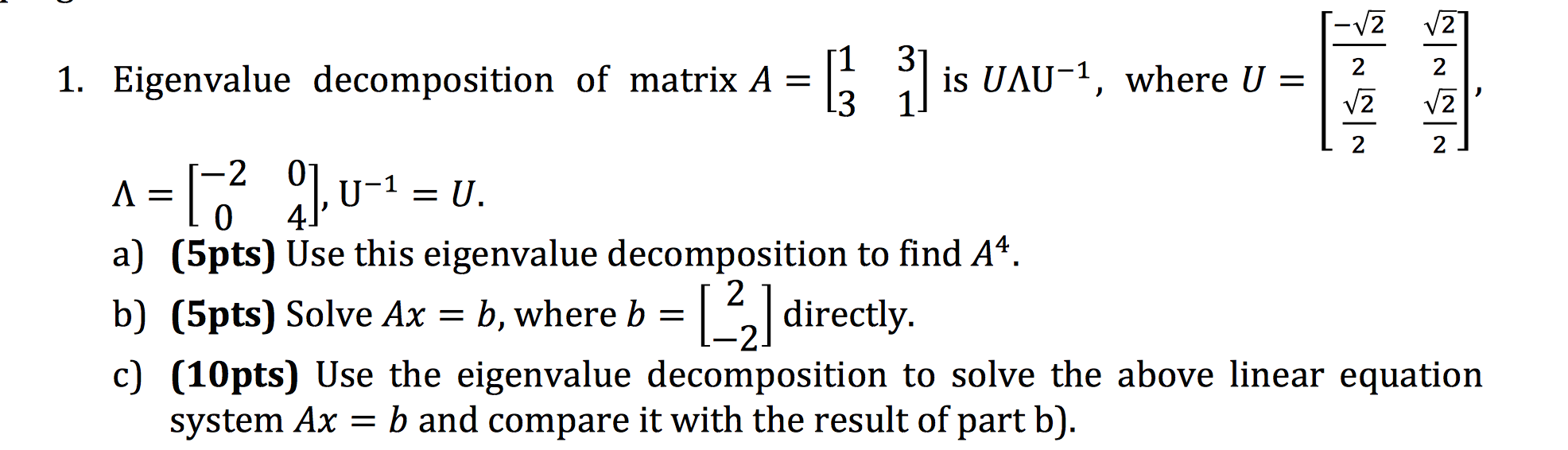

I have been studying the SVD algorithm recently, and I can understand how it might be used for compression, but I am trying to figure out whether there is a perspective in which SVD can be seen as a low-pass filter.

So, before we discuss SVD, I want to check whether my understanding of eigenvalue decomposition is correct. The eigenvalue decomposition of a (diagonalizable) square matrix can be written as:

$$A = V D V^{-1}$$

where $V$ is the matrix whose columns are the eigenvectors of $A$ and $D$ is the diagonal matrix whose diagonal entries are the corresponding eigenvalues.

So, I can perform compression using eigenvalue decomposition by setting the eigenvalues under some threshold to 0. In this case, the smaller eigenvalues will have a relatively shrinking effect on the rows of $V^{-1}$ and will overall contribute less.

The main question that I have, and this relates to eigenvalue decomposition as well as SVD, is whether there is some relationship to the frequency content of the signal. Is the compression algorithm acting like a low-pass filter that, depending on the threshold set, stops the high-frequency components from passing through and so acts as a smoothing operator, or is there no relationship there? Do the smaller eigenvalues contribute to the high-frequency components of the signal?

Image filtering (in the frequency domain) is performed by decomposing an image into its frequency components and removing part of the spectrum. In this sense, both SVD and image filtering perform a decomposition of an image based on a change of basis. The similarity between the two techniques stops there, as they operate over different domains: SVD performs a decomposition based on the spatial structure of a matrix (image), whereas spectral filters look at its frequency components.

This question is more nuanced than I believe the previous discussions have embraced. Here is the question I believe Mhenni is asking, and I provide in turn an answer in the form of a proof.

Theorem: For a real symmetric matrix $R$, the eigenvectors and the left singular vectors can be taken to be the same, and the right singular vectors equal the eigenvectors up to a product with a diagonal matrix $T$ whose diagonal entries are $\pm 1$, corresponding to the signs of the eigenvalues of $R$.

Proof: Let $R = U L U'$, with $U U' = I$ and $L = \mathrm{diag}(\lambda_i,\ i = 1, \dots, N)$, be the eigendecomposition of an $N \times N$ symmetric matrix $R$, possibly sign-indefinite. $R$ shares its eigenvectors with $R - \min(L)\,I$, since subtracting a multiple of the identity only shifts every eigenvalue by the same amount. The eigenvalues of $R - \min(L)\,I$ are non-negative, so for that shifted matrix the eigendecomposition is already an SVD $V S W'$, with $S$ diagonal and non-negative and $V = W = U$. For $R$ itself the signs have to be absorbed somewhere. Let $T$ be the diagonal matrix carrying the signs of the $\lambda_i$ (taking $+1$ for a zero eigenvalue). If $U$ is a matrix whose columns are eigenvectors of $R$, then so is $UT$, because right-multiplying by $T$ only flips the signs of individual columns, and $UT$ is still orthogonal: $UT\,(UT)' = U T T' U' = U U' = I$. Note that $TU$ is also orthogonal, since $TU\,(TU)' = T U U' T' = I$, but it flips rows rather than columns, so its columns are in general not eigenvectors of $R$. Now set the right singular vectors of $R$ to $W = UT$ and the singular values to $S = LT = |L|$. Then $U S W' = U L T T' U' = U L U' = R$, so the left singular vectors are the eigenvectors $U$ and the right singular vectors are $UT$, up to the usual reordering of the singular values into decreasing order. This of course does not hold for general matrices, only for normal ones.
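The sign bookkeeping in the symmetric case can be checked numerically. The following is a minimal sketch, not part of the original answers: the $2 \times 2$ matrices and all helper names (`matmul`, `transpose`, `close`, `is_eigenvector`) are assumptions chosen for illustration, and it uses only the Python standard library. It builds a sign-indefinite symmetric $R$ from a known eigendecomposition, forms $S = LT$ and $W = UT$, and compares that with applying $T$ on the left instead.

```python
def matmul(A, B):
    """Multiply two matrices stored as lists of rows."""
    return [[sum(a * b for a, b in zip(row, col)) for col in zip(*B)] for row in A]

def transpose(A):
    return [list(col) for col in zip(*A)]

def close(A, B, tol=1e-12):
    """Entrywise comparison of two matrices of the same shape."""
    return all(abs(a - b) <= tol for ra, rb in zip(A, B) for a, b in zip(ra, rb))

def is_eigenvector(R, x, tol=1e-12):
    """For a unit vector x, check R x = q x with q the Rayleigh quotient x' R x."""
    Rx = [sum(r * xi for r, xi in zip(row, x)) for row in R]
    q = sum(a * b for a, b in zip(x, Rx))
    return all(abs(a - q * b) <= tol for a, b in zip(Rx, x))

# Assumed example: orthogonal U (a 3-4-5 rotation) and eigenvalues 3 and -1.
U = [[0.6, -0.8], [0.8, 0.6]]
L = [[3.0, 0.0], [0.0, -1.0]]
R = matmul(matmul(U, L), transpose(U))   # symmetric, sign-indefinite

T = [[1.0, 0.0], [0.0, -1.0]]            # signs of the eigenvalues
S = matmul(L, T)                         # S = L T = |L| = diag(3, 1)
W = matmul(U, T)                         # candidate right singular vectors
TU = matmul(T, U)                        # T applied on the other side

I2 = [[1.0, 0.0], [0.0, 1.0]]
svd_ok = close(matmul(matmul(U, S), transpose(W)), R)        # R = U S W'
w_orthogonal = close(matmul(W, transpose(W)), I2)
w_cols_are_eigvecs = all(is_eigenvector(R, col) for col in transpose(W))
tu_orthogonal = close(matmul(TU, transpose(TU)), I2)
tu_cols_are_eigvecs = any(is_eigenvector(R, col) for col in transpose(TU))

print(svd_ok, w_orthogonal, w_cols_are_eigvecs, tu_orthogonal, tu_cols_are_eigvecs)
```

In this example both $UT$ and $TU$ come out orthogonal, but only the columns of $UT$ remain eigenvectors of $R$, which is why orthogonality alone is not the right test.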

What you say, that is, that the singular values are the absolute values of the eigenvalues, makes sense only for normal matrices, e.g., Hermitian ones. Say $A\in\mathbb{C}^{n\times n}$ is normal with eigendecomposition $A = U\Lambda U^*$, where $U$ is unitary and $\Lambda$ is diagonal. Then an SVD of $A$ is

$$A = (U D_1^*)\,(D_1 \Lambda D_2^*)\,(U D_2^*)^* \tag{1}$$

where $D_1$ and $D_2$ are diagonal matrices such that $D_i^*D_i = I$ (that is, the diagonal elements have unit absolute value) and such that $D_1\Lambda D_2^*$ has a non-negative diagonal (for simplicity, I assume the eigen/singular values to be distinct). Therefore, the singular vectors are scalar multiples of the eigenvectors.

Now suppose the SVD $A = WSV^*$ is given and let $w_i = We_i$ be the $i$th column of $W$. What happens if you compute the Rayleigh quotient $w_i^*Aw_i$? Using (1), we have

$$w_i^* A w_i = e_i^* D_1 U^* (U \Lambda U^*) U D_1^* e_i = e_i^* D_1 \Lambda D_1^* e_i = \lambda_i.$$

That is, the Rayleigh quotient associated with the $i$th left singular vector (the right one would work as well) gives you the eigenvalue $\lambda_i$. That's not surprising at all, since the eigenspace associated with the eigenvalue $\lambda_i$ is the same as the singular vector space associated with the singular value $|\lambda_i|$, and hence the Rayleigh quotients of the singular vectors must be equal to the eigenvalues.
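The Rayleigh-quotient statement can be tried on a small normal matrix that is not Hermitian. This is a hedged sketch under stated assumptions: the matrices `U`, `Lam`, `D1`, `D2` and the helper names are hypothetical choices made here, not taken from the answer, and only the standard library is used. It builds an SVD $A = WSV^*$ from the eigendecomposition by absorbing unit-modulus phases, then evaluates $w_i^* A w_i$ for each left singular vector.

```python
def matmul(A, B):
    """Multiply two (possibly complex) matrices stored as lists of rows."""
    return [[sum(a * b for a, b in zip(row, col)) for col in zip(*B)] for row in A]

def ctranspose(A):
    """Conjugate transpose."""
    return [[x.conjugate() for x in col] for col in zip(*A)]

def close(A, B, tol=1e-12):
    return all(abs(a - b) <= tol for ra, rb in zip(A, B) for a, b in zip(ra, rb))

def rayleigh(A, w):
    """Rayleigh quotient w* A w for a unit vector w."""
    Aw = [sum(a * wi for a, wi in zip(row, w)) for row in A]
    return sum(wi.conjugate() * awi for wi, awi in zip(w, Aw))

# Assumed example: unitary U and eigenvalues 3i and -1, so A is normal
# but not Hermitian.
U = [[0.6, -0.8], [0.8, 0.6]]
Lam = [[3j, 0], [0, -1]]
A = matmul(matmul(U, Lam), ctranspose(U))

# Unit-modulus diagonal matrices chosen so that D1 Lam D2* = diag(3, 1).
D1 = [[1j, 0], [0, 1]]
D2 = [[-1, 0], [0, -1]]
S = matmul(matmul(D1, Lam), ctranspose(D2))   # singular values |3i| and |-1|
W = matmul(U, ctranspose(D1))                 # left singular vectors
V = matmul(U, ctranspose(D2))                 # right singular vectors

svd_ok = close(matmul(matmul(W, S), ctranspose(V)), A)   # A = W S V*
quotients = [rayleigh(A, list(col)) for col in zip(*W)]

print(svd_ok, quotients)
```

The quotients recover the complex eigenvalues, phases included, while `S` holds only their absolute values.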
